Deep Dive: Everlaw's first defensible GenAI product

Translating complex RAG technology into a trusted legal tool used by 100+ firms.

Result

72% conversion rate of beta customers to paid customers, with adoption by 100+ firms post-GA

Problem

The dilemma: Attorneys are stuck between slow manual review and fast AI that risks hallucinations.

Reviewing documents is currently a choice between two bad options:

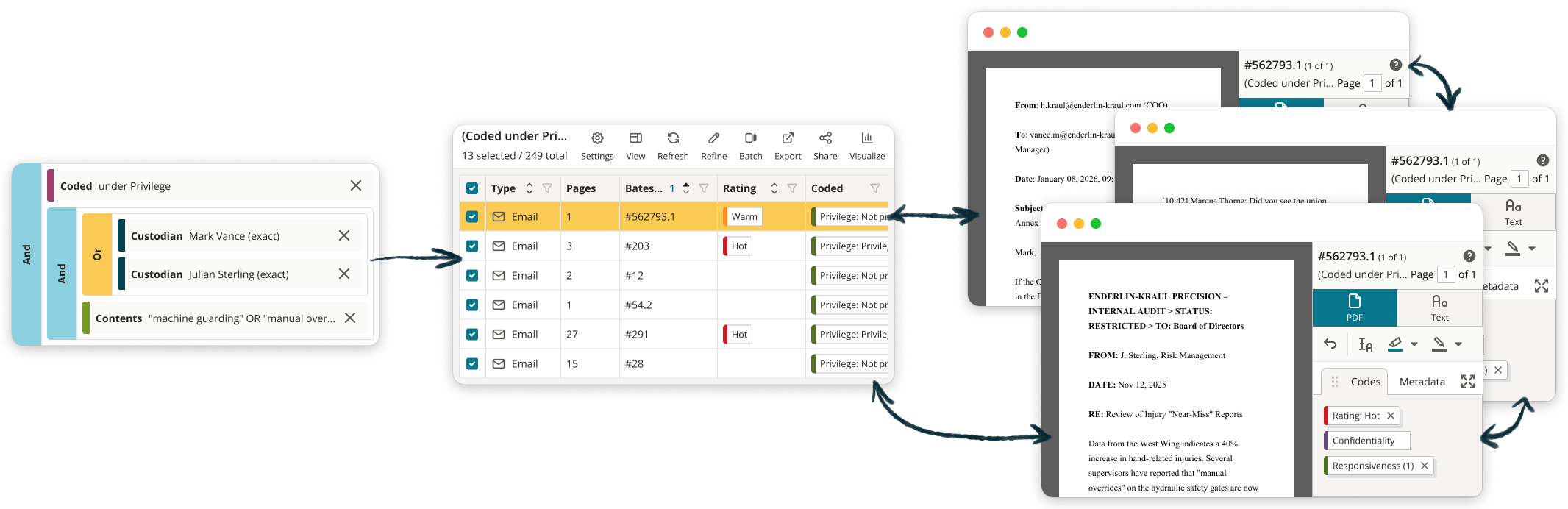

Manual review in Everlaw: build searches, view results, view individual documents

I was the only designer on a team of 3 PMs and 6 engineers. My job: figure out what "trustworthy AI" actually looks like in a RAG (retrieval-augmented generation) tool, and cut everything that wasn't essential to proving it.

How might we build a search tool that's fast and trustworthy for attorneys?

Key Decisions

Rejecting the chatbot trend

When I started, everyone assumed we were building a chatbot—leadership, the PMs, and even some engineers. But my early research told a different story: attorneys didn't want exploration, they wanted answers they could defend in court. Instead of mirroring consumer GenAI patterns, I pushed back.

I advocated for discrete Q&A for the MVP, focusing on:

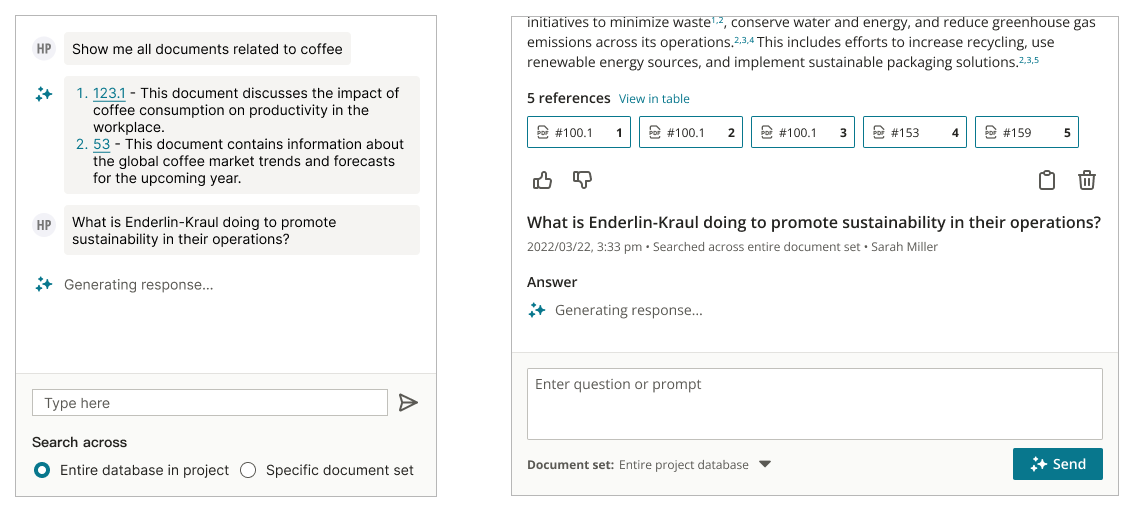

✂️ One question, one answer

In almost every early interview, attorneys said some version of the same thing: "I don't need it to chat with me. I need to know if I can cite this in court." That clarity shaped a foundational design decision and moved me away from an open-ended conversational interface.

Early explorations of a continuous chat experience

I pushed for a stripped-down model: one question, one response, and no memory of previous turns. It felt counter to consumer GenAI tools, but it meant that each response could be defensible on its own.
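To make the "no memory of previous turns" idea concrete, here is a minimal sketch of a stateless question-and-answer unit. It is an illustration only, not Everlaw's implementation: `QAUnit`, `ask`, and `fake_answer_fn` are hypothetical names, and the answer function is a stand-in for whatever retrieval and generation step actually produces a response.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class QAUnit:
    """One self-contained question and its answer (hypothetical structure)."""
    question: str
    answer: str


def ask(question: str, answer_fn: Callable[[str], str]) -> QAUnit:
    # Only the current question is passed along. No earlier turns are
    # included, so each unit can be read and checked on its own.
    return QAUnit(question=question, answer=answer_fn(question))


def fake_answer_fn(prompt: str) -> str:
    # Stand-in for the real retrieval and generation step (an assumption).
    return f"Answer based only on: {prompt!r}"


first = ask("Who approved the contract?", fake_answer_fn)
second = ask("When was it signed?", fake_answer_fn)
```

Because `ask` takes no history argument, the second answer cannot depend on the first question. That independence is the property that lets each response be defended without reference to an earlier exchange.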

Getting buy-in wasn't easy. I had to challenge leadership's early assumptions about a conversational interface. In the end, this decision reinforced user trust in the system and set clear expectations for users to frame purposeful questions.

Individual question and answer units

👩🏻‍💻 A workspace for verification, not interruption

Reviewing facts requires constant cross-checking between documents, results, and citations. I prototyped an inline experience at first—AI responses floating to the side of the user's window—but the inline experience felt disruptive. Attorneys would have to read the claim, verify the source document, then lose context of the page. The inline design actively made verification harder.

A document window layered on top of Deep Dive layered on top of the platform

I designed Deep Dive as its own full-page workspace so attorneys could focus on the generated response and citations, allowing them to validate facts without losing sight of the original question or response.

Attorneys had to intentionally navigate to Deep Dive, and this friction signaled that this tool was a place for evidence review and verification, not casual assistance.

Full-page workspace design

📜 No claims without citations

In legal work, an AI-generated insight without sources is not evidence—it's a liability.

Every claim in a Deep Dive response is directly followed by links to its sources, allowing attorneys to immediately verify claims in the source documents. I also added a resources table showing every document that informed the response, so attorneys could see the full evidence list at a glance.
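The "no claims without citations" rule can be sketched as a small data structure plus a check. This is a hedged illustration under assumed names (`Claim`, `validate_claims`, `resources_table`, `render`, and the `DOC-…` identifiers are all hypothetical), not a description of how Deep Dive is built.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Claim:
    """A single statement in a response and the documents backing it."""
    text: str
    source_ids: Tuple[str, ...]


def validate_claims(claims: List[Claim]) -> None:
    # Reject the whole response if any claim lacks a source.
    for claim in claims:
        if not claim.source_ids:
            raise ValueError(f"Uncited claim: {claim.text!r}")


def resources_table(claims: List[Claim]) -> List[str]:
    # Every document that informed the response, de-duplicated,
    # in the order each was first cited.
    seen: List[str] = []
    for claim in claims:
        for doc_id in claim.source_ids:
            if doc_id not in seen:
                seen.append(doc_id)
    return seen


def render(claims: List[Claim]) -> str:
    # Each claim is directly followed by its source links.
    validate_claims(claims)
    lines = []
    for claim in claims:
        links = " ".join(f"[{doc_id}]" for doc_id in claim.source_ids)
        lines.append(f"{claim.text} {links}")
    return "\n".join(lines)


claims = [
    Claim("The contract was signed in March.", ("DOC-12", "DOC-40")),
    Claim("The vendor was notified late.", ("DOC-40",)),
]
rendered = render(claims)
table = resources_table(claims)
```

In this sketch, `rendered` places the source links immediately after each claim, and `table` lists each cited document once, which mirrors the inline citations and the resources table described above.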

Citation-anchored responses

Impact

From beta to 100+ firms

72% conversion rate of beta customers to paid customers

100+ firms adopted Deep Dive post-GA

During the closed beta program, I ran 25+ onboarding sessions alongside my PM. I wanted to see firsthand where attorneys hesitated, what they trusted, and what made them skeptical. This feedback shaped the final design, from how citations were formatted to how we showed users the AI was working.

Following GA, Deep Dive was adopted by over 100 firms, with customers using it in early case assessment to quickly orient themselves in large document sets. Customers mentioned significant time savings compared to manual review.

"I got a better idea of what's going on in this case in 5 minutes than an attorney telling me. I loved it." (Customer during a live virtual demo of Deep Dive)

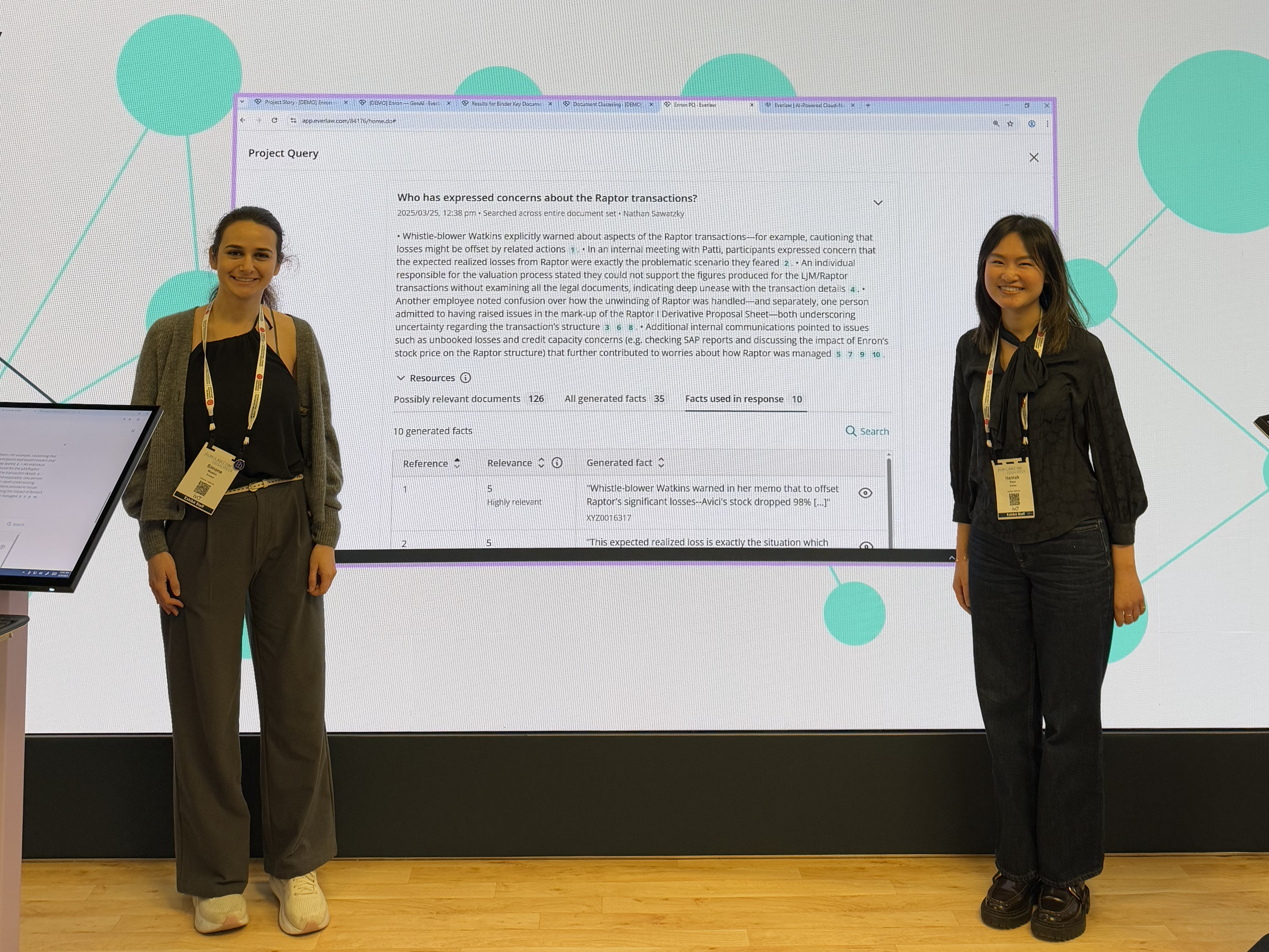

Simone Wasbin (PM) and I presenting Deep Dive at LegalWeek New York 2025

Beyond the MVP

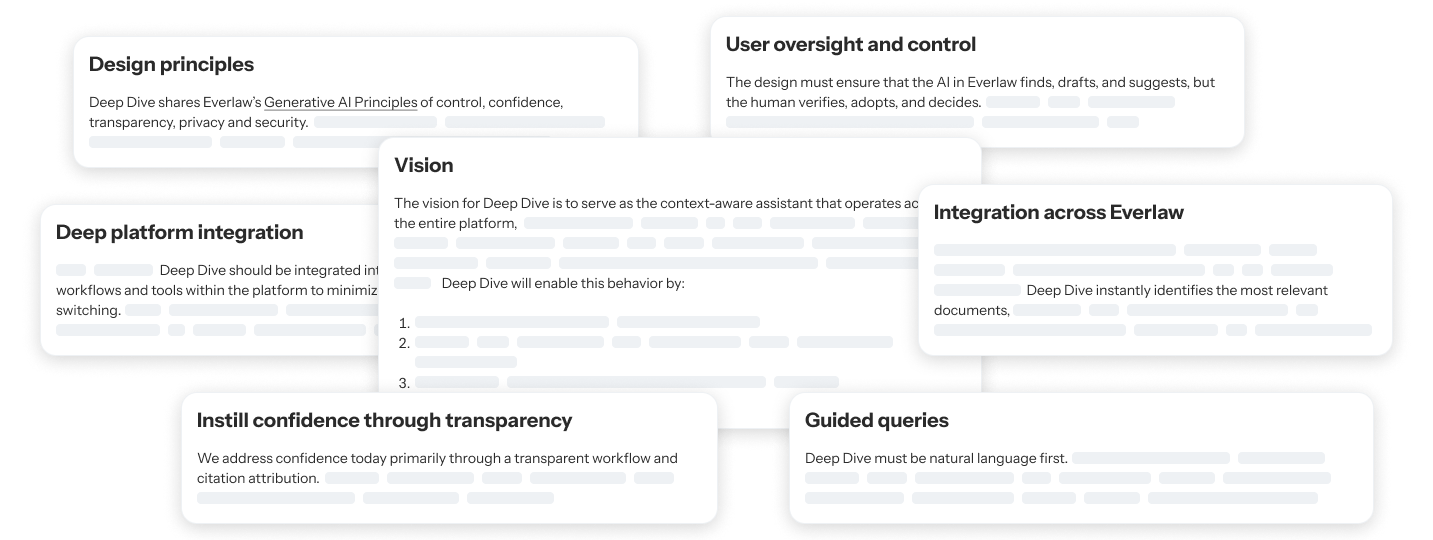

Leading Everlaw's GenAI design direction

The success of the MVP allowed me to shift from designing individual Deep Dive features to organization-wide leadership: defining how Everlaw should think about GenAI design.

Collaborating with other designers, I wrote a design principles document that governs all AI features we build, influencing how future GenAI features are scoped, designed, and shipped.

Learnings

What designing high-stakes GenAI taught me

Looking back, there are several decisions I would revisit now that both the technology and my approach to AI design have matured.

📦 Define what the AI won't do first

Initially, I kept designing for what the model could do. These broad explorations delayed decision-making, and I learned that when designing for GenAI, defining what a system won't do is just as important as defining what it will.

🛣️ User trust is built through design

I initially delayed follow-up questions to protect the defensibility of the responses. However, I now understand that user trust in products is built over time and can be guided through design. I would explore constrained follow-ups (pre-defined, verifiable prompts) sooner, partnering more closely with engineering to understand the scope.

🤔 Think workflows, not features

Deep Dive was originally built just to find answers, but legal work is about putting those insights to use.

I would prioritize designing structured outputs (e.g. tables, formatted facts) earlier in the roadmap. This would allow insights from Deep Dive to flow directly into existing review and collaboration workflows.